Exploring Real-Time Characters and Virtual Production Workflows

Unreal Engine 5

EXPERIMENTAL NARRATIVE AND CONCEPT

Drew Campbell

2/5/2026 · 4 min read


A key theme emerging in the Experimental Narrative and Concept module is the rapid evolution of technologies changing how animation and VFX stories are produced. Rather than simply following a traditional pipeline, this module encourages experimentation with emerging tools and hybrid workflows. The goal is not just to replicate industry practice, but to explore how technology might reshape storytelling and audience experience.

During this session, we explored recent developments in Unreal Engine and MetaHuman technology, particularly updates arriving with Unreal Engine 5.6. What stood out immediately was how much the workflow is shifting toward real-time production and faster iteration.

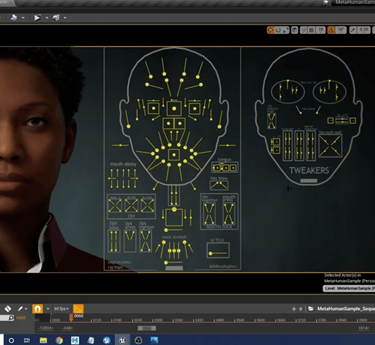

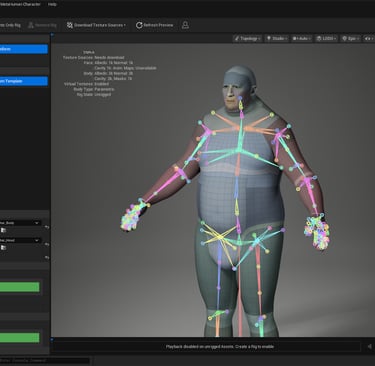

MetaHuman Creator Inside Unreal

One of the biggest changes is that MetaHuman Creator is now integrated directly into Unreal Engine, rather than existing purely as a web-based tool. Previously, characters were created online and then exported to Unreal, adding extra steps to the process. Integrating the system directly inside the editor allows character creation and iteration to happen much more fluidly within a project.

Another significant improvement is the use of cloud-assisted processing. While the artist works locally, heavy tasks such as rig assembly and texture preparation can be handled through Epic’s cloud services. As demonstrated in the presentation, character assembly, which previously took 15 to 20 minutes, can now take only a few minutes. From a production perspective, this dramatically speeds up experimentation and iteration.

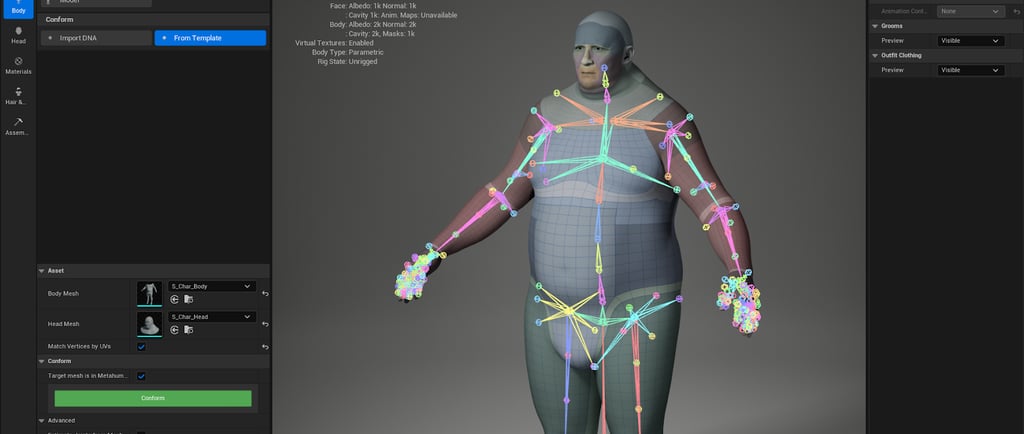

The system also introduces a parametric body tool that allows characters to be adjusted using real-world measurements such as height, shoulder width, and muscle mass. Clothing systems automatically adapt to these body changes in real time, removing the need to rebuild multiple body variants for different outfits manually.

What interests me about this development is how it lowers the technical barrier to producing believable digital characters. This allows creators to focus more on performance and storytelling rather than spending excessive time on technical setup.

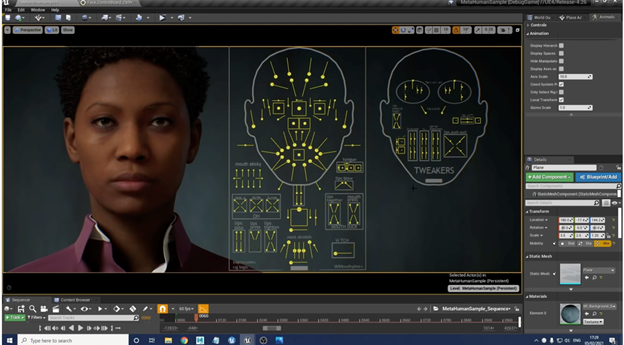

Real-Time Facial Animation

Another demonstration focused on MetaHuman Animator, which enables high-fidelity facial animation driven by standard video footage. The system can capture performance data from relatively simple sources, including a consumer webcam, without requiring traditional facial-tracking markers.

This introduces an interesting creative possibility. Because performances can be captured in real time, spontaneous moments or “happy accidents” can become part of the animation process. These unscripted performances can sometimes feel more natural than carefully keyframed animation.

In practical terms, this means digital characters can be performed almost as if they were live actors, allowing the performer’s expression and timing to drive the animation.

Planning a Virtual Production Scene

For the practical task in this module, we are asked to build a virtual environment in Unreal Engine and integrate live-action footage filmed against a green or blue screen. The suggested duration for the scene is around twenty to forty seconds, which encourages a concise idea that demonstrates the technology while remaining manageable.

A key lesson from the session was the importance of planning the Unreal environment before filming. In traditional workflows, footage is often shot first, and digital elements are matched in post-production. Virtual production reverses this process: the digital environment is built first, and the real-world lighting and camera setup are adjusted to match it.

Several planning steps were recommended before building the scene:

reference imagery and lighting inspiration

simple layout and blocking

storyboard or concept sketches

an asset list for props and environment elements

matching the scale of the digital environment to the physical studio space
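
The last planning step, matching scale, can be checked with simple arithmetic. The sketch below is a minimal illustration rather than part of the session material: it relies on Unreal Engine's default scale of 1 Unreal unit = 1 centimetre, and the `StudioSpace` class, the function names, and the studio dimensions are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

# Unreal Engine's default scale: 1 Unreal unit = 1 centimetre.
UNREAL_UNITS_PER_METRE = 100.0


@dataclass
class StudioSpace:
    """Usable shooting area in metres (hypothetical values for illustration)."""
    width_m: float
    depth_m: float
    height_m: float


def metres_to_unreal_units(metres: float) -> float:
    """Convert a real-world length in metres to Unreal units."""
    return metres * UNREAL_UNITS_PER_METRE


def blockout_box(space: StudioSpace) -> Tuple[float, float, float]:
    """Return (X, Y, Z) extents in Unreal units for a grey box matching the studio."""
    return (
        metres_to_unreal_units(space.width_m),
        metres_to_unreal_units(space.depth_m),
        metres_to_unreal_units(space.height_m),
    )


if __name__ == "__main__":
    # Assumed studio size; replace with measurements of the real space.
    studio = StudioSpace(width_m=6.0, depth_m=4.0, height_m=3.0)
    print(blockout_box(studio))
```

Building the first grey box to these extents gives a quick sanity check that the performer's walking space in the studio fits inside the virtual set.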

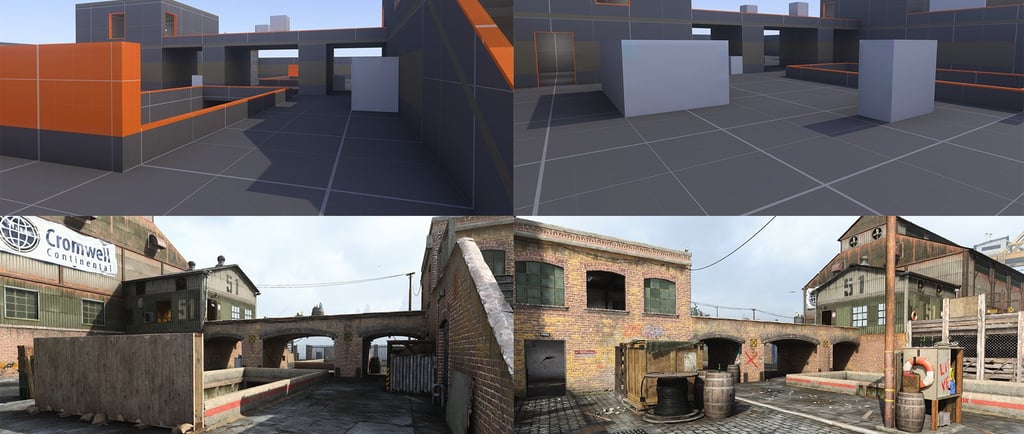

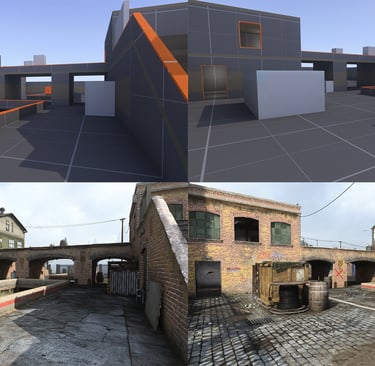

Starting with a simple grey block-out version of the set allows camera placement and framing to be tested before adding detailed assets.

Real-Time Optimisation and Lighting

Because Unreal renders in real time, optimisation is an important consideration. For example, background objects often do not require extremely high-resolution textures, particularly if they will appear blurred through depth of field. Using lower-resolution textures in distant areas helps maintain performance.
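
One way to reason about how much texture resolution a distant object needs is to estimate how many pixels it covers on screen. The sketch below uses a simple pinhole-camera estimate; it is a rough heuristic for planning, not a description of how Unreal's own texture streaming works, and the function names, field of view, and size limits are assumptions chosen for illustration.

```python
import math


def on_screen_pixels(object_width_m: float, distance_m: float,
                     h_fov_deg: float, image_width_px: int) -> float:
    """Estimate how many horizontal pixels an object covers at a given distance."""
    # Width of the scene visible at that distance for the given horizontal FOV.
    visible_width_m = 2.0 * distance_m * math.tan(math.radians(h_fov_deg) / 2.0)
    return object_width_m / visible_width_m * image_width_px


def suggested_texture_size(pixels: float, min_size: int = 64,
                           max_size: int = 4096) -> int:
    """Smallest power-of-two size covering `pixels`, clamped to [min_size, max_size]."""
    size = min_size
    while size < pixels and size < max_size:
        size *= 2
    return size


if __name__ == "__main__":
    # Assumed example: a 2 m wide prop, 90 degree FOV, 1920 px wide frame.
    for distance in (1.0, 5.0, 20.0):
        px = on_screen_pixels(2.0, distance, 90.0, 1920)
        print(distance, round(px), suggested_texture_size(px))
```

Under these assumptions, the same prop that fills the frame at 1 m covers only about 96 pixels at 20 m, so a much smaller texture would be enough there, even before depth of field blurs it further.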

Depth is also important for cinematic composition. Building foreground, midground, and background elements helps create parallax when the camera moves, enhancing the sense of space.
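
The parallax effect can be estimated with the same pinhole-camera model: for a sideways camera move, a point's shift on screen is inversely proportional to its depth. The sketch below is an illustration under assumed values (a 90 degree horizontal field of view, a 1920-pixel-wide frame, and a 0.5 m camera slide); the function names are hypothetical.

```python
import math


def focal_length_px(h_fov_deg: float, image_width_px: int) -> float:
    """Focal length in pixels for a pinhole camera with the given horizontal FOV."""
    return (image_width_px / 2.0) / math.tan(math.radians(h_fov_deg) / 2.0)


def parallax_shift_px(camera_move_m: float, depth_m: float,
                      h_fov_deg: float = 90.0, image_width_px: int = 1920) -> float:
    """Horizontal on-screen shift of a point at `depth_m` for a lateral camera move."""
    return focal_length_px(h_fov_deg, image_width_px) * camera_move_m / depth_m


if __name__ == "__main__":
    # Assumed layer depths in metres for foreground, midground, and background.
    layers = {"foreground": 2.0, "midground": 10.0, "background": 50.0}
    for name, depth in layers.items():
        print(name, round(parallax_shift_px(0.5, depth), 1))
```

With these numbers the foreground moves roughly 240 pixels, the midground about 48, and the background under 10, which is the difference in motion that makes the three layers read as depth.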

Lighting should also be intentional rather than random. Treating lighting as a way to “paint with light” allows shadows and highlights to guide the viewer’s attention while maintaining detail in both bright and dark areas.

When filming green screen footage, the most important principle is to separate the screen's lighting from the performer's lighting. Evenly lighting the screen creates a clean key, while lighting the subject independently allows them to match the environment of the virtual scene.
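
How evenly the screen is lit can be checked numerically by taking light readings at several points across it and comparing the brightest with the darkest. The sketch below expresses that spread in stops, where one stop is a doubling of light. The function names and the sample readings are hypothetical, and the 0.5-stop tolerance is an assumed parameter for illustration, not a figure from the session.

```python
import math
from typing import Sequence


def spread_in_stops(readings: Sequence[float]) -> float:
    """Difference between the brightest and darkest reading, in stops."""
    lowest, highest = min(readings), max(readings)
    if lowest <= 0:
        raise ValueError("readings must be positive")
    # One stop is a factor of two in light, hence the base-2 logarithm.
    return math.log2(highest / lowest)


def is_evenly_lit(readings: Sequence[float], tolerance_stops: float = 0.5) -> bool:
    """True if all readings fall within the assumed tolerance of each other."""
    return spread_in_stops(readings) <= tolerance_stops


if __name__ == "__main__":
    # Hypothetical readings from the corners and centre of the screen.
    screen_readings = [100.0, 110.0, 105.0, 98.0, 102.0]
    print(round(spread_in_stops(screen_readings), 2), is_evenly_lit(screen_readings))
```

The same readings can come from a light meter or from luminance values sampled from a test frame; what matters is that the screen is measured separately from the light falling on the performer.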

Reflection

What stood out most from this session is how tools like Unreal Engine and MetaHuman are blurring the line between filmmaking and real-time game technology. Performance, digital environments, and animation can now be combined in ways that were previously far more complex.

For this module in particular, the emphasis is on experimentation rather than polish, encouraging exploration of how emerging tools might support new forms of storytelling.

References

Epic Games (2024) MetaHuman 5.7 release notes. Available at: https://dev.epicgames.com/documentation/en-us/metahuman/metahuman-5-7-release-notes (Accessed: 5 March 2026).

GfxSpeak (2021) Unreal getting MetaHumans. Available at: https://gfxspeak.com/featured/unreal-getting-metahumans/ (Accessed: 5 March 2026).

Level Design Book (n.d.) Blockout. Available at: https://book.leveldesignbook.com/process/blockout (Accessed: 5 March 2026).

Fig. 1: MetaHuman face animation (Epic Games, 2024)

Fig. 2: MetaHuman Interface (GfxSpeak, 2021)

Fig. 3: Unreal Engine greybox environment blockout (Level Design Book, n.d.)

Fig. 4: Lighting, camera, and green screen (Epic Games, 2024)

Business address: Voice of Drew, Carlisle, CA2 6ER | UTR: 7259771174 Copyright Drew Campbell 2024